- May 17, 2023

- Posted by: KARTHIK RANGAMANI

- Categories: Application Engineering, Cloud Engineering

Introduction

In today’s digital age, businesses rely heavily on cloud computing infrastructure to enable efficient and scalable operations. Google Cloud Platform offers a powerful set of tools to manage and deploy virtual machines (VMs) across a distributed network. However, ensuring the security and seamless intercommunication of these VMs can be challenging. In this article, we will explore how to enable intercommunication of distributed Google Virtual Machines via a secured private network, providing a solution to this problem.

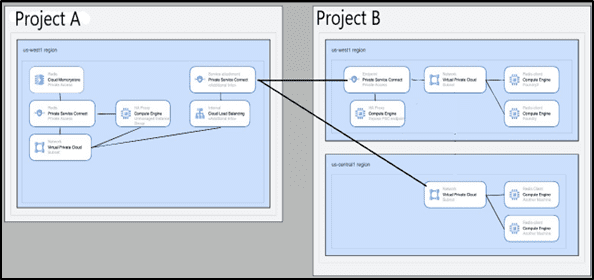

Let's take a closer look at the situation. One of our clients requested multitenant support for their newly launched application(s), which convert their customers' sentiments from text to speech for their end users. The real difficulty lay in connecting services that lived in different VPCs while providing multitenant support for customers spread across different geographies. At first, we thought VPC peering might be the best way to connect multiple VPCs in different regions, but we soon discovered its main limitations:

- Overlapping IP ranges are not allowed between peered networks.

- Peering is limited to a maximum of 50 connections per project, but the client has more than 70 customers in their production project.
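These two blockers can be checked mechanically. The sketch below is a minimal model that uses only Python's standard `ipaddress` module. The 50-connection limit is the figure quoted in this article, and the customer names are hypothetical. Following the overlap rule above, it assumes a hub cannot be peered with networks whose ranges overlap its own range or each other's.

```python
import ipaddress
from itertools import combinations

# Per-project peering limit as quoted in this article; confirm your actual quota.
PEERING_LIMIT = 50


def peering_blockers(hub_cidr, customer_ranges, limit=PEERING_LIMIT):
    """List reasons why peering one hub VPC to every customer VPC would fail.

    customer_ranges maps a (hypothetical) customer name to its subnet CIDR.
    An empty list means neither constraint is violated.
    """
    hub = ipaddress.ip_network(hub_cidr)
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in customer_ranges.items()}
    reasons = []

    # Constraint 1: one peering per customer VPC, capped by the quota.
    if len(nets) > limit:
        reasons.append(f"needs {len(nets)} peerings, limit is {limit}")

    # Constraint 2a: no customer range may overlap the hub's range...
    for name, net in nets.items():
        if net.overlaps(hub):
            reasons.append(f"{name} overlaps hub range {hub}")

    # ...and 2b: customers peered to the same hub may not overlap each other.
    for (a, net_a), (b, net_b) in combinations(nets.items(), 2):
        if net_a.overlaps(net_b):
            reasons.append(f"{a} and {b} overlap")
    return reasons
```

For 70 customers that all use 10.0.3.0/24, the function reports the quota problem first, followed by one entry for every overlapping customer pair, which is why peering was ruled out.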

After further research, we identified Private Service Connect (PSC) as the enabler for a quicker solution. We communicated this to the client team and implemented the solution in the client environment.

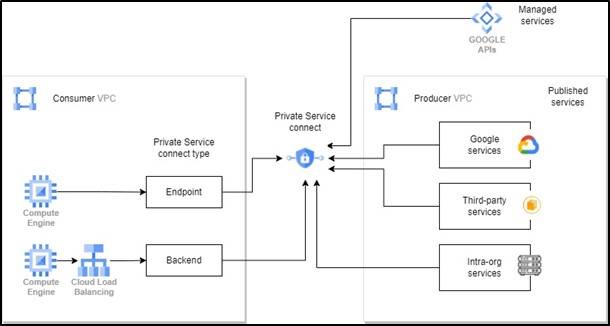

The illustration demonstrates how Private Service Connect lets consumers reach managed services, such as Google APIs and published services, through endpoints and backends.

Introduction to Google Private Service Connect

Google Cloud networking provides Private Service Connect, which lets users access managed services privately from within their VPC network. It also lets managed service providers host these services in their own VPC networks and offer private connections to their consumers.

As a result, consumers can reach services using internal IP addresses, without leaving their VPC networks or using external IP addresses. All traffic stays within Google Cloud, which gives precise control over how services are accessed.

Managed services of various types are supported by Private Service Connect, including the following:

- Published VPC-hosted services, which include the following:

  - Google-published services, such as the GKE control plane

  - Third-party published services, such as Databricks and Snowflake, made available through Private Service Connect partners

  - Intra-organization published services, which let two separate VPC networks within the same company act as consumer and producer respectively

- Google APIs, such as Cloud Storage and BigQuery

Features

Private Service Connect provides private connectivity with the following salient features:

- Private Service Connect is service-oriented: producer services are published through load balancers that expose only a single IP address to the consumer VPC network. Consumer traffic to producer services is therefore one-way and limited to that service IP address, rather than gaining access to an entire peered VPC network.

- It provides a precise authorization model that gives producers and consumers fine-grained control. Because only the intended service endpoints can connect to a service, unauthorized access to other resources is prevented.

- Between consumer and producer VPC networks, there are no shared dependencies. There is no need for IP address coordination or any other shared resource dependencies because NAT is used to facilitate traffic between them. Because of their independence, managed services can be deployed quickly and scaled as needed.

- Enhanced performance and bandwidth by directing traffic from consumer clients to producer backends directly, without any intermediary hops or proxies. The physical host machines that house the consumer and producer VMs are where NAT is directly configured. The bandwidth capacity of the client and server machines that are directly communicating sets a limit on the bandwidth available.
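The NAT-based independence described in these features can be pictured with a toy model. This is not Google's implementation: `ToyPscNat` and the 192.168.100.0/24 NAT range are hypothetical. It only shows why two consumers using the same private address need no coordination once their traffic is source-NATed into a producer-owned range (in real deployments, a subnet created with purpose `PRIVATE_SERVICE_CONNECT`).

```python
import ipaddress


class ToyPscNat:
    """Toy model of source NAT on the producer side of Private Service Connect.

    Each (consumer, source IP) pair gets its own address from the producer's
    NAT subnet, so consumers with identical private ranges stay distinguishable.
    Illustration only; this is not how Google implements PSC internally.
    """

    def __init__(self, nat_subnet):
        # iter() guards against hosts() returning a list for tiny subnets.
        self._free = iter(ipaddress.ip_network(nat_subnet).hosts())
        self._table = {}

    def translate(self, consumer, source_ip):
        key = (consumer, ipaddress.ip_address(source_ip))
        if key not in self._table:
            # Raises StopIteration once the NAT subnet is exhausted.
            self._table[key] = next(self._free)
        return self._table[key]
```

Two tenants that both send from 10.0.3.5 receive different translated addresses, and repeat traffic from the same tenant reuses its existing mapping.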


Step-by-step guide

1. Create a new project, shared-resource-vpc, to host Redis as a centralized service shared across multiple projects.

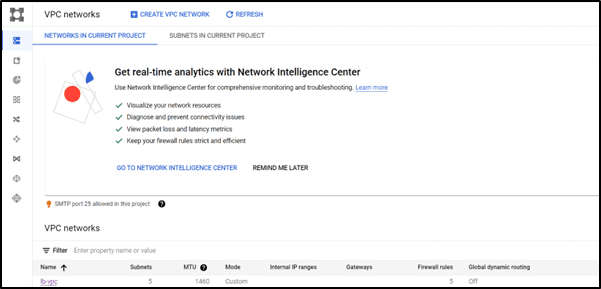

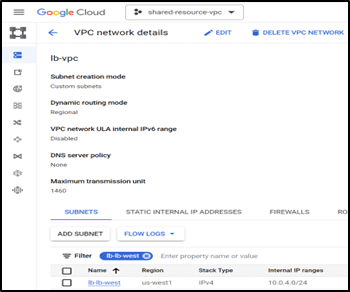

2. VPC creation: create a new VPC network named lb-vpc in the shared-resource-vpc project, with its subnet in the US-West region.

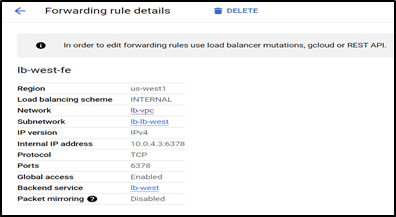

For instance, assume all of the client's customers use the same IP range, 10.0.3.0/24. To avoid overlapping IPs, we created a new subnet (lb-lb-west) with the range 10.0.4.0/24 in the shared-resource-vpc project. The subnet is created in the US-West region, assuming all the VMs in the project are also in that region, because Private Service Connect only allows same-region (intra-region) connections, whereas VPC peering allows connections across regions.
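Steps 1 and 2 could be scripted roughly as follows. This minimal sketch only builds `gcloud` argument lists (run each one with `subprocess.run(cmd, check=True)`). It assumes billing and the Compute Engine API are already set up and uses `us-west1` as a stand-in for the US-West region. It also refuses any subnet range that overlaps a customer range, mirroring the 10.0.3.0/24 versus 10.0.4.0/24 choice above. Project IDs must be globally unique, so treat `shared-resource-vpc` as illustrative.

```python
import ipaddress


def network_setup_commands(project, network, subnet, region, cidr, customer_ranges):
    """Build gcloud commands for steps 1-2 (project, custom-mode VPC, subnet).

    Raises ValueError if the proposed subnet overlaps any customer range.
    """
    candidate = ipaddress.ip_network(cidr)
    for used in customer_ranges:
        if candidate.overlaps(ipaddress.ip_network(used)):
            raise ValueError(f"{candidate} overlaps customer range {used}")
    return [
        ["gcloud", "projects", "create", project],
        # Custom mode: no auto-created subnets that might collide with customers.
        ["gcloud", "compute", "networks", "create", network,
         f"--project={project}", "--subnet-mode=custom"],
        ["gcloud", "compute", "networks", "subnets", "create", subnet,
         f"--project={project}", f"--network={network}",
         f"--region={region}", f"--range={candidate}"],
    ]
```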

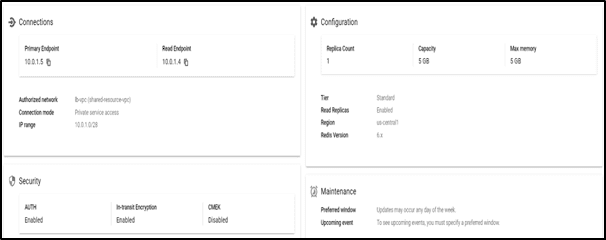

3. Create a Redis (Memorystore) instance in the Standard tier in the shared-resource-vpc project, connected to lb-vpc, with security features such as AUTH and TLS enabled.
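A command-line equivalent of step 3 might look like this sketch. The instance name `shared-redis`, the 1 GB size, and `us-west1` are assumptions. The flags request the Standard tier, AUTH, and in-transit encryption (TLS), which Memorystore serves on port 6378.

```python
def redis_create_command(project, name, region, network, size_gb=1):
    """Build a gcloud command for a Standard-tier Memorystore for Redis instance
    with AUTH and in-transit encryption (TLS) enabled."""
    return [
        "gcloud", "redis", "instances", "create", name,
        f"--project={project}",
        f"--region={region}",
        f"--size={size_gb}",
        "--tier=standard",  # Standard tier: primary plus replica
        f"--network=projects/{project}/global/networks/{network}",
        "--enable-auth",  # clients must send the AUTH string
        "--transit-encryption-mode=server-authentication",  # TLS
    ]
```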

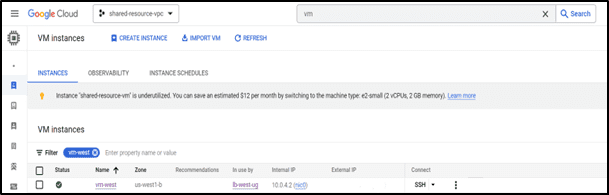

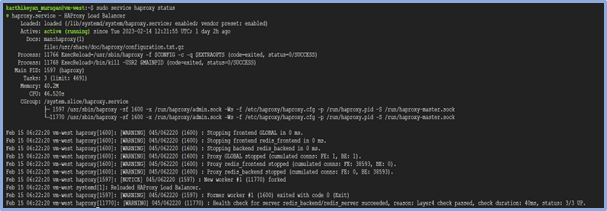

4. Create a new VM in the shared-resource-vpc project and install HAProxy on it to route traffic to the Redis instance.
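For step 4, HAProxy only needs to pass TCP through to Redis, so the TLS session stays end to end between the client and Memorystore. The sketch below renders a minimal configuration. The Redis IP is a hypothetical placeholder for your instance's primary endpoint, and the timeouts are arbitrary defaults.

```python
def haproxy_redis_config(redis_ip, port=6378):
    """Render a minimal HAProxy config that forwards raw TCP (including the
    TLS stream) from this VM to a Memorystore for Redis instance."""
    return "\n".join([
        "defaults",
        "    mode tcp",            # layer-4 passthrough; TLS is not terminated
        "    timeout connect 5s",
        "    timeout client 1m",
        "    timeout server 1m",
        "",
        "frontend redis_in",
        f"    bind *:{port}",
        "    default_backend memorystore",
        "",
        "backend memorystore",
        f"    server redis_primary {redis_ip}:{port} check",  # TCP health check
        "",
    ])
```

Write the output to `/etc/haproxy/haproxy.cfg`, validate it with `haproxy -c -f /etc/haproxy/haproxy.cfg`, and then reload HAProxy.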

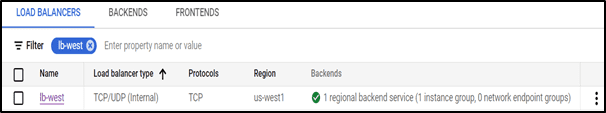

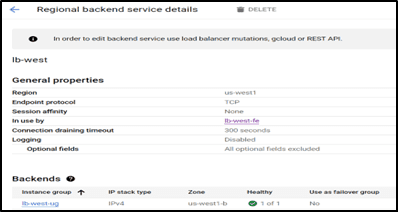

5. Use an internal load balancer to manage the service connection across projects: create a new internal TCP load balancer named lb-west in the shared-resource-vpc project.

Configure the backend with port 6378 enabled so that it communicates with the proxy VM, and configure the frontend with a forwarding rule on an internal IP address.
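Step 5 could be expressed as the following `gcloud` commands, again built as argument lists. The health check and forwarding rule names are hypothetical. The sketch assumes an instance group (here `haproxy-ig`, also hypothetical) already contains the HAProxy VM. A firewall rule allowing Google Cloud health-check probes to reach port 6378 is also required and is not shown.

```python
def ilb_commands(project, region, zone, network, subnet, instance_group,
                 name="lb-west", port=6378):
    """Build gcloud commands for an internal passthrough TCP load balancer
    whose backend is an (assumed, pre-existing) HAProxy instance group."""
    hc = f"{name}-hc"  # hypothetical health check name
    fr = f"{name}-fr"  # hypothetical forwarding rule name
    scope = [f"--project={project}", f"--region={region}"]
    return [
        # Regional TCP health check against the HAProxy listener.
        ["gcloud", "compute", "health-checks", "create", "tcp", hc, *scope,
         f"--port={port}"],
        # Regional internal backend service: the "lb-west" load balancer.
        ["gcloud", "compute", "backend-services", "create", name, *scope,
         "--load-balancing-scheme=internal", "--protocol=TCP",
         f"--health-checks={hc}", f"--health-checks-region={region}"],
        ["gcloud", "compute", "backend-services", "add-backend", name, *scope,
         f"--instance-group={instance_group}", f"--instance-group-zone={zone}"],
        # Frontend: internal forwarding rule on the Redis TLS port.
        ["gcloud", "compute", "forwarding-rules", "create", fr, *scope,
         "--load-balancing-scheme=internal", f"--network={network}",
         f"--subnet={subnet}", "--ip-protocol=TCP", f"--ports={port}",
         f"--backend-service={name}", f"--backend-service-region={region}"],
    ]
```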

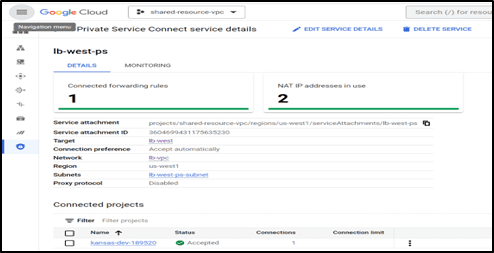

6. Use Private Service Connect to configure the publisher (producer) and receiver (consumer) connection. In our case, the publisher is the shared-resource-vpc project and the receiver is the Kansas-dev-18950 project.

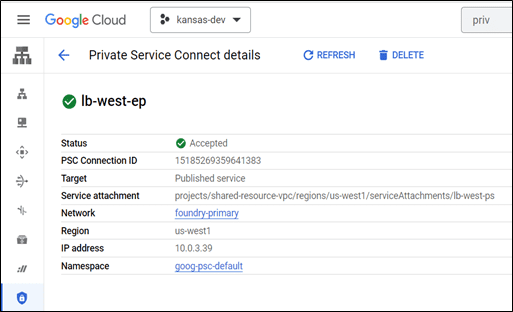

After publishing the service with Private Service Connect in the publisher project, use its service attachment ID when creating the endpoint in the consumer project to establish the connection between the two projects.
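Step 6 splits into producer and consumer halves. In this sketch, the producer creates a PSC NAT subnet and a service attachment that points at the load balancer's forwarding rule. The consumer reserves an internal IP and creates an endpoint that targets the attachment's URI. All resource names, the `10.0.5.0/24` NAT range, and the project IDs `shared-resource-vpc` and `kansas-dev-18950` are assumptions. `ACCEPT_AUTOMATIC` accepts any consumer; switching to `ACCEPT_MANUAL` with an accept list gives the finer-grained control described earlier.

```python
def psc_commands(producer_project, consumer_project, region,
                 producer_network, consumer_network, consumer_subnet,
                 forwarding_rule="lb-west-fr", attachment="redis-psc-attachment",
                 nat_subnet="psc-nat-west", nat_range="10.0.5.0/24",
                 endpoint="redis-psc-endpoint", endpoint_ip_name="redis-psc-ip"):
    """Build (producer_cmds, consumer_cmds) gcloud argument lists for PSC.
    All default resource names are hypothetical."""
    attachment_uri = (f"projects/{producer_project}/regions/{region}"
                      f"/serviceAttachments/{attachment}")
    producer = [
        # Subnet reserved for PSC source NAT of consumer traffic.
        ["gcloud", "compute", "networks", "subnets", "create", nat_subnet,
         f"--project={producer_project}", f"--network={producer_network}",
         f"--region={region}", f"--range={nat_range}",
         "--purpose=PRIVATE_SERVICE_CONNECT"],
        # Publish the internal load balancer's forwarding rule.
        ["gcloud", "compute", "service-attachments", "create", attachment,
         f"--project={producer_project}", f"--region={region}",
         f"--producer-forwarding-rule={forwarding_rule}",
         "--connection-preference=ACCEPT_AUTOMATIC",
         f"--nat-subnets={nat_subnet}"],
    ]
    consumer = [
        # Internal IP the consumer's VMs will use to reach Redis.
        ["gcloud", "compute", "addresses", "create", endpoint_ip_name,
         f"--project={consumer_project}", f"--region={region}",
         f"--subnet={consumer_subnet}"],
        # The endpoint: a forwarding rule that targets the service attachment.
        ["gcloud", "compute", "forwarding-rules", "create", endpoint,
         f"--project={consumer_project}", f"--region={region}",
         f"--network={consumer_network}", f"--address={endpoint_ip_name}",
         f"--target-service-attachment={attachment_uri}"],
    ]
    return producer, consumer
```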

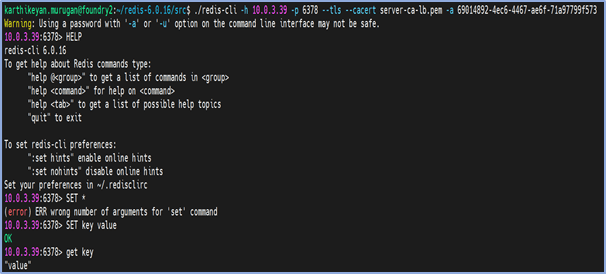

7. Test the connection from the foundry2 VM in the Kansas-dev project to the Redis Memorystore instance: connect through the Private Service Connect endpoint, using the TLS certificate installed on the foundry2 VM.
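Step 7's connectivity test could be run with `redis-cli` from the foundry2 VM. This sketch assumes a `redis-cli` build with TLS support, the Memorystore CA certificate saved locally, and the AUTH string already retrieved (for example with `gcloud redis instances get-auth-string`). The endpoint IP is a placeholder. Passing the AUTH string through the `REDISCLI_AUTH` environment variable keeps it off the command line.

```python
import os


def redis_cli_ping(endpoint_ip, ca_cert_path, auth_string, port=6378):
    """Return (argv, env) for a TLS PING through the PSC endpoint via redis-cli.

    The AUTH string goes into REDISCLI_AUTH so it does not appear in the
    process list.
    """
    argv = ["redis-cli", "-h", endpoint_ip, "-p", str(port),
            "--tls", "--cacert", ca_cert_path, "PING"]
    env = {**os.environ, "REDISCLI_AUTH": auth_string}
    return argv, env
```

Run it with `subprocess.run(argv, env=env, check=True)`. A reply of `PONG` means the PSC endpoint, load balancer, HAProxy, and Redis are all reachable.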

Benefits

PSC brings several benefits to organizations with a large customer base spread across disparate locations.

- Seamless connectivity for businesses in distributed locations, with a better user experience, especially for SaaS application users

- Consumers can access Google's services directly over Google's backbone network, which is more robust and typically offers lower latency than the public internet

- PSC keeps customer traffic off the public internet, creating a secured private network for transmitting data away from potential intruders

- All services are accessible via endpoints with private IP addresses, so consumers need no proxy servers of their own

- Affordable pricing: VM-to-VM egress between different regions of the same network, using internal or external IP addresses, costs as little as 0.01 USD per GB (for example, between the US and Canada)
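To put the pricing bullet in numbers, this sketch treats the quoted 0.01 USD figure as a per-GB inter-region rate (an assumption; check current Google Cloud network pricing) and uses `Decimal` to avoid floating-point rounding in currency math.

```python
from decimal import Decimal

# Rate quoted in this article, assumed to be USD per GB; verify current pricing.
INTER_REGION_RATE_USD_PER_GB = Decimal("0.01")


def monthly_egress_cost(gb_per_month, rate=INTER_REGION_RATE_USD_PER_GB):
    """Estimate monthly VM-to-VM inter-region egress cost in USD, to the cent."""
    return (Decimal(str(gb_per_month)) * rate).quantize(Decimal("0.01"))
```

For example, 1,400 GB a month (say, 70 tenants at 20 GB each) comes to 14.00 USD at that rate.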


Conclusion

Secure intercommunication of distributed Google Virtual Machines via a private network is a crucial step in ensuring the efficient and scalable operation of cloud computing infrastructure. With the right tools and best practices in place, businesses can take advantage of Google Cloud Platform while keeping their data and operations secure. By following the steps in this article, organizations can confidently deploy and manage their virtual machines across a distributed network, achieving seamless intercommunication and network security.