Data and Analytics Services

From gut feeling to informed action

Whether you’re navigating market trends, refining customer experiences, optimizing operations, or innovating with limited resources, we bridge the gap between raw data and actionable insights. Our personalized approach resonates with executives, marketers, operational teams and end consumers alike.

The ‘you and I’ connection is at the heart of our services: a partnership in which we ask, ‘How can we help?’ and then turn your data into your North Star, steering your business toward accelerated value.

Leaders Who Trust Us

Everest Group recently named us a ‘Major Contender’ in its Data and Analytics Services PEAK Matrix report.

Challenges and solutions

Here's what keeps us up at night!

Disconnected data sources hinder comprehensive analysis.

Outdated infrastructure limits data accessibility and integration.

Difficulty in protecting sensitive information from breaches and unauthorized access.

Inability to scale data operations to meet growing business needs.

Difficulty in extracting meaningful insights from vast datasets.

Five steps to take that giant leap!

We break down silos, integrating diverse data sources seamlessly to provide a holistic foundation for comprehensive analysis.

Upgrade your systems with us to ensure improved data accessibility and real-time processing, aligning your infrastructure with the demands of the digital era.

Your data’s safety is our priority. We implement robust security protocols, safeguarding against breaches and ensuring the confidentiality of your valuable information.

We provide scalable infrastructure tailored to accommodate your evolving data requirements, ensuring adaptability as your enterprise grows.

Harness the power of AI/ML through our services, enabling predictive insights and actionable intelligence that propel your business forward.

Our services

Harness the full potential of your data with our comprehensive Data Engineering Solutions. Our expert team specializes in

Modernize your data infrastructure for agility and scalability. Our services include:

Unlock actionable insights and drive strategic decisions through our Data Analytics services

Stay ahead in the era of intelligence with our AI/ML services

Our work

Get evidence-based insights with our accelerators!

Our pre-built IP solutions, or Accelerators, set us apart from our peers in delivering agility and scale while ridding you of the bloat.

teX.ai for all your NLP & LLM requirements

End-to-end Databricks implementation accelerator to streamline your data processes, analytics and management

Data assurance framework with 100% accuracy, synergizing seamlessly to accelerate your engineering roadmap.

We provide data analysis, discovery and interpretation services that our clients love!

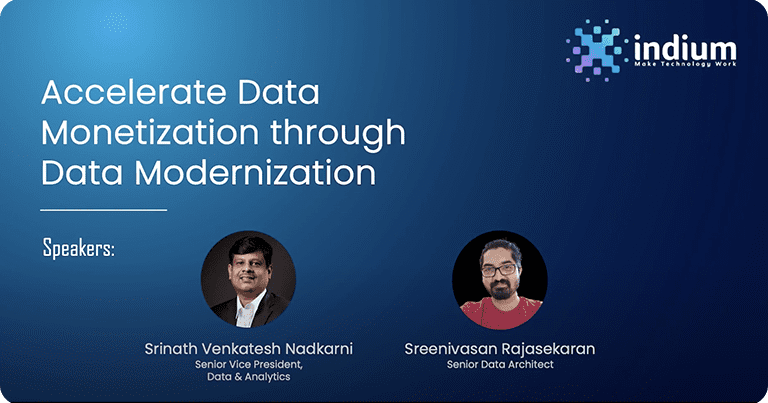

