Explore Opportunities

Recognitions and Awards

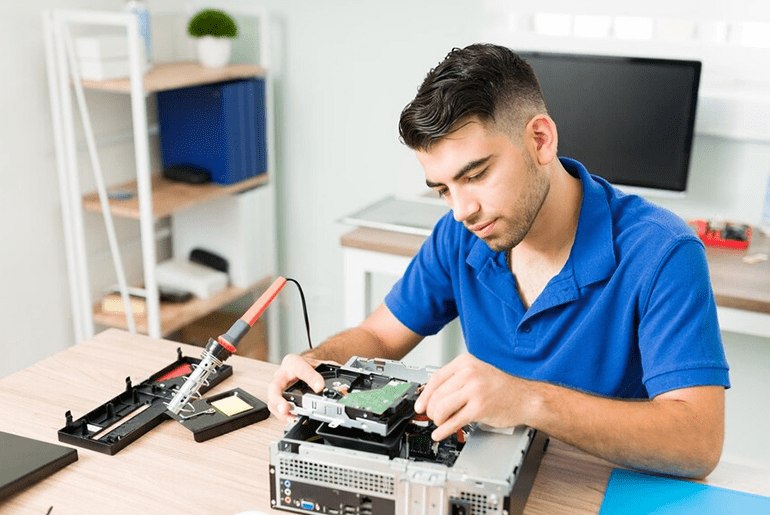

Life at Indium

Over the years, we have built a culture where team efforts create a significant impact, not only within the company but across the globe.

Get ready to engineer, collaborate, solve & grow with the great minds at Indium, a digital engineering hub for talent across the globe.

Talent Growth Catalyst (EVP)

Meritocratic growth

Global opportunities in next-gen tech

iLD Fueling Excellence

Embracing a People-First, Hybrid Work Culture

Ingram - Stay Engaged, Stay Connected

Our journey at Indium is not just about the work; it’s about the people, the experiences, and the knowledge we gain along the way. Join us on social media to stay engaged and connected, and to be part of our vibrant and dynamic community.

Build Your Dream Career

Job Features

Job Category: Application Engineering

Job Category: Digital Assurance

Job Category: Application Engineering

Job Category: Application Engineering

Job Category: Digital Assurance

Job Category: Application Engineering

Job Category: Digital Assurance

Job Category: Digital Assurance

Job Category: Application Engineering

Job Category: Application Engineering